How SentinelOne’s Autonomous AI Defense Stopped a Zero-Day Supply Chain Attack Targeting LLM Infrastructure

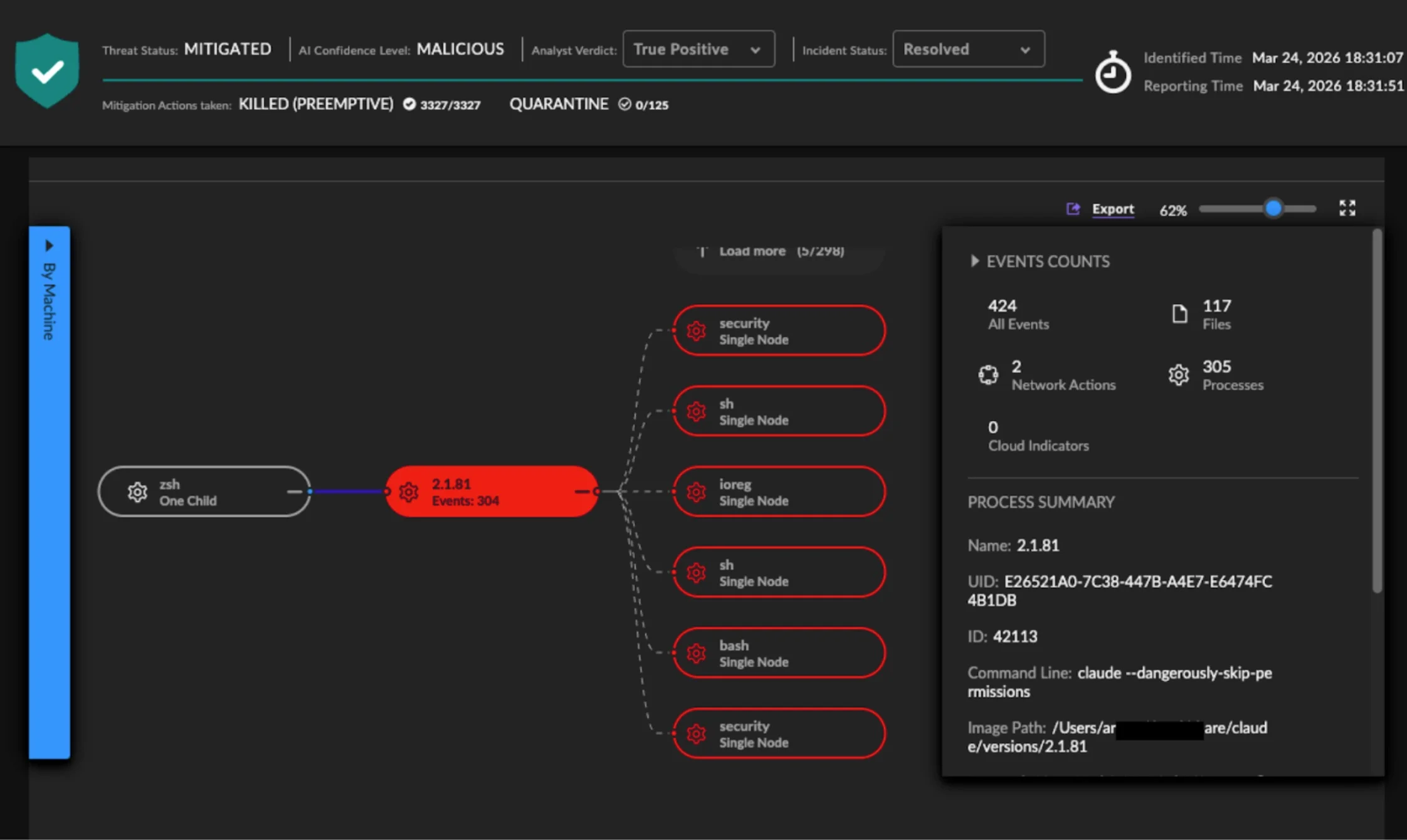

SentinelOne's autonomous AI detected and blocked a zero-day supply chain attack on LiteLLM within 44 seconds, stopping a trojaned package installed by Anthropic's Claude Code from executing its malicious payload across customer environments.

In a rapidly evolving threat landscape, manual security workflows can no longer keep pace with machine-speed attacks. On March 24, 2026, SentinelOne’s Singularity Platform demonstrated the power of autonomous, AI-native defense by detecting and blocking a sophisticated zero-day supply chain compromise of LiteLLM, a widely used proxy layer for LLM API calls. The compromised package was pulled in by Anthropic’s Claude AI coding assistant running with elevated permissions, and it was stopped before it could execute malicious payloads across multiple customer environments. Below, we break down the key aspects of this incident through a series of frequently asked questions.

What occurred on March 24, 2026, regarding LiteLLM and SentinelOne?

On that day, SentinelOne’s autonomous detection system identified a trojaned version of LiteLLM that had been compromised just hours earlier. The malicious package attempted to execute obfuscated Python code across several customer environments. Without any analyst intervention (no query was written and no SOC team triaged an alert), the Singularity Platform identified and blocked the payload before it could run. The speed mattered because the attack was multi-stage: it was built for data theft, persistence, lateral movement through Kubernetes, and encrypted exfiltration, all within a short window. SentinelOne’s proactive, behavior-based approach ensured that the threat was neutralized globally on the same day it launched.

How did SentinelOne’s AI detect and stop the attack autonomously?

SentinelOne’s macOS agent observed a malicious process chain originating from Anthropic’s Claude Code, which was running with the --dangerously-skip-permissions flag. This allowed Claude to update LiteLLM to the compromised version without a human developer running a manual pip install. The AI engine classified the behavior as MALICIOUS and executed a preemptive kill (KILLED PREEMPTIVE) across 424 related events in under 44 seconds. Crucially, the system did not rely on knowing beforehand that the package was compromised; it analyzed process behavior in real time, such as the execution of base64-encoded commands, and acted on that behavioral pattern alone.
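To make the idea of acting on behavior rather than on a known-bad package name more concrete, here is a minimal Python sketch of one such heuristic: flagging a command line that decodes a base64 payload containing risky calls. It is an illustration only, not SentinelOne’s detection logic; the function names, the marker list, and the 24-character token threshold are all assumptions chosen for this example.

```python
import base64
import binascii
import re

# Hypothetical heuristic for illustration; not SentinelOne's engine.
# A run of 24+ base64-alphabet characters is treated as a candidate payload.
_B64_TOKEN = re.compile(r"[A-Za-z0-9+/]{24,}={0,2}")

# Assumed list of strings that make a decoded payload look risky.
_SUSPICIOUS = ("exec(", "eval(", "subprocess", "os.system", "socket")


def decode_candidates(cmdline: str) -> list[str]:
    """Return text recovered from base64-looking tokens in a command line."""
    decoded = []
    for token in _B64_TOKEN.findall(cmdline):
        try:
            raw = base64.b64decode(token, validate=True)
            decoded.append(raw.decode("utf-8"))
        except (binascii.Error, UnicodeDecodeError):
            # Not valid base64, or not text once decoded: ignore the token.
            continue
    return decoded


def looks_like_encoded_exec(cmdline: str) -> bool:
    """Flag command lines that mention base64 and carry a risky decoded payload."""
    if "base64" not in cmdline and "b64decode" not in cmdline:
        return False
    return any(
        marker in text
        for text in decode_candidates(cmdline)
        for marker in _SUSPICIOUS
    )
```

A real behavioral engine would weigh far more context (parent process, file writes, network activity); this sketch only shows that the decision can be made from what a process does, with no list of known-compromised packages.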

What is LiteLLM, and why was it a target?

LiteLLM is a popular proxy layer that simplifies API calls to large language models (LLMs) from providers such as OpenAI and Anthropic. It is widely used in AI infrastructure to manage API keys, rate limits, and routing. Because of its central role in many organizations’ LLM workflows, compromising LiteLLM could give attackers a foothold to intercept sensitive prompts, manipulate outputs, or steal API credentials. In this multi-stage supply chain attack, the trojaned LiteLLM package was designed to execute malicious Python code upon installation, enabling lateral movement and data exfiltration. Its widespread usage made it a high-value target for adversaries aiming to infiltrate AI pipelines at scale.

How did Anthropic’s Claude AI assistant contribute to the attack?

Anthropic’s Claude Code, an autonomous coding assistant, inadvertently triggered the attack when it updated LiteLLM to the compromised version. Operating with unrestricted permissions via the --dangerously-skip-permissions flag, Claude performed the update as part of its normal workflow; no human developer ran a manual installation. This highlights a new class of risk: AI agents acting autonomously can accelerate the spread of compromised software. SentinelOne’s system did not distinguish between human-initiated and AI-initiated processes. It watched process behavior and stopped the malicious activity regardless of the source, showing that behavioral AI can defend against threats introduced by both human and machine actors.

Why is autonomous, AI-native defense critical against such attacks?

Traditional security relies on signature updates, analyst queries, and manual triage, all of which take time. In this case, the attack spanned multiple stages (compromise, execution, lateral movement, exfiltration) within hours, faster than a human investigation could follow. Autonomous defense closes this gap by operating at machine speed: detecting malicious behavior in milliseconds, correlating 424 events within 44 seconds, and containing threats without human intervention. This architecture is not a feature enhancement but a fundamental shift. It ensures that even novel zero-day attacks are stopped based on what they do, not on prior knowledge, making organizations resilient against AI-powered and supply chain threats.

What lessons does this incident teach about securing AI infrastructure?

First, AI agents like Claude must operate with least privilege; overly permissive flags can turn them into vectors for compromise. Second, supply chain attacks on AI packages such as LiteLLM are the new normal, so organizations must implement continuous behavioral monitoring for all dependencies. Third, human-driven workflows are too slow to respond to multi-stage attacks that unfold in hours, which makes autonomous, host-based behavioral detection that can preemptively kill malicious processes essential. Finally, security must be AI-native, meaning it uses AI not only to detect but also to act in real time, without relying on signatures or manual escalation. This incident serves as a blueprint for defending against future machine-speed attacks.
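As a small illustration of the least-privilege lesson, the following Python sketch checks an agent launch command for the permissive flag named in this incident. It is a hedged example rather than a recommended control: the function name and the idea of keeping a deny-list of flags are assumptions for illustration, and the list contains only the one flag mentioned above.

```python
import shlex

# Assumed deny-list for illustration; only the flag named in this article.
RISKY_FLAGS = {"--dangerously-skip-permissions"}


def risky_flags_in(cmdline: str) -> set[str]:
    """Return the deny-listed flags present in a shell-style command line."""
    # shlex.split honors shell quoting, so a flag that appears inside a
    # quoted argument is not mistaken for a standalone option.
    return RISKY_FLAGS.intersection(shlex.split(cmdline))
```

A check like this could run in a wrapper script or a CI policy step before an agent is started; it complements, and does not replace, behavioral monitoring on the host.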

What makes SentinelOne’s approach unique in stopping this zero-day?

SentinelOne’s Singularity Platform does not require prior knowledge of an attack: no signature updates, no IOCs, and no human-written queries. By monitoring process behavior at the host level, its AI engine identifies anomalies in real time, such as the decoding of base64-encoded payloads during installation. This behavioral approach was key in stopping the LiteLLM compromise: the agent saw the malicious process chain and killed it preemptively across all affected environments simultaneously. The platform’s fully autonomous response, with no analyst involvement in initial containment, demonstrates a level of maturity in AI-native security that is critical for defending against sophisticated, multi-surface attacks targeting modern AI infrastructure.